1.1

Defining osteoporosis

This chapter introduces the topic of osteoporosis from the perspective of the bone. Its purpose is to consider the definition of osteoporosis and to discuss the nature of osteoporotic bone, including the characteristics that affect its ability to resist fracture. Osteoporosis is a condition of generalized skeletal fragility in which bone strength is sufficiently weak that fractures occur with minimal trauma, often no more than is applied by routine daily activity. Albright and Reifenstein proposed in 1948 that primary osteoporosis consists of two separate entities, one related to menopausal estrogen loss and the other to aging. This concept was elaborated upon by Riggs et al. who suggested the terms “Type I osteoporosis,” to signify a loss of trabecular bone after menopause, and “Type II osteoporosis,” to represent a loss of cortical and trabecular bone in men and women as the end result of age-related bone loss. By this formulation the Type I disorder directly results from lack of endogenous estrogen, while Type II osteoporosis reflects the composite influences of long-term remodeling inefficiency, inadequacy of dietary calcium and vitamin D, along with altered intestinal mineral absorption, renal mineral handling, and parathyroid hormone (PTH) secretion. Although there may be heuristic value to defining subsets of patients in this manner, the model suffers by not accounting for the complex and multifactorial nature of a disease that defies rigid categorization.

Bone mass at any time in adult life reflects the peak investment in bone mineral at skeletal maturity minus that which has been subsequently lost. A woman who experienced interruption of menses, extended bed rest, an eating disorder, or systemic illness during her adolescent growth years might enter adult life having failed to achieve the bone mass that would have been predicted from her genetic or constitutional profile. Also, some women might achieve their genetically determined peak bone mass, but the genetic factors might predict a low peak bone mass level. In both cases, even with a normal rate of subsequent bone loss, her skeleton would still be in jeopardy simply due to the deficit in peak bone mass. Thus it seems most appropriate to consider osteoporosis the consequence of a stochastic process, that is, multiple genetic, physical, hormonal, and nutritional factors acting alone or in concert to diminish skeletal integrity.

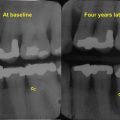

Historical artifacts show that characteristic deformities of vertebral osteoporosis were recognized in antiquity, although broad awareness of this condition has come about only during the past four decades. Unfortunately, because traditional radiographic techniques cannot detect osteoporosis until it is severe, confirmation of the diagnosis remained problematic until the 1980s, when the introduction of single-photon absorptiometry, then dual-photon absorptiometry, and finally dual-energy X-ray absorptiometry (DXA) allowed quantitative assessment of bone mineral density (BMD). Prior to these BMD assessment technologies, diagnosis was by necessity clinical, requiring a history of one or more low-trauma fractures. Although highly specific, such a grossly insensitive diagnostic criterion offered no assistance to physicians hoping to identify and treat affected individuals who had not yet sustained a fracture.

The introduction of accurate noninvasive bone mass measurements afforded the opportunity to estimate a person’s fracture risk and to make an early diagnosis of osteoporosis. Briefly stated, large prospective studies showed that a reduction in BMD of one standard deviation from the mean value for a young, normal reference population confers a two- to threefold increase in long-term fracture risk. In a manner similar to that by which serum cholesterol concentration predicts risk for myocardial infarction or blood pressure predicts risk for stroke, BMD measurements can successfully identify subjects at increased risk of fracture and can help physicians select those individuals who will derive the greatest benefit from initiation of therapy.

Several factors limit the ability of BMD measurements to predict an individual’s fracture risk with great accuracy. BMD is clearly related to body weight, yet routine clinical bone mass assessments may not be weight-adjusted. Various features of bone geometry that affect bone strength and fracture risk are not generally considered in the clinical interpretation of bone mass measurements, including bone size as well as the spatial distribution of bone mass. Moreover, bone mass determinations cannot distinguish individuals with low mass and intact microarchitecture from those with equal mass who have trabecular disruption and cortical porosity. Other factors, including age, bone turnover rate, and falling risk, are independent risk factors for fracture, at least in older subjects, and cannot be assessed by BMD measurement.

In 1994 a group of senior investigators in this field offered a working definition of osteoporosis based exclusively on bone mass. The reasoning behind this proposal, made on behalf of the World Health Organization (WHO), was that the clinical significance of osteoporosis lies exclusively in the occurrence of fracture, that BMD measurements predict long-term fracture risk, and that selection of rigorous diagnostic criteria would minimize the number of patients who are incorrectly diagnosed. The authors suggested that osteoporosis be diagnosed at a BMD value 2.5 or more standard deviations below the average for healthy young adult women. Using this value, approximately 30% of postmenopausal women would be designated as osteoporotic, which gives a realistic projection of lifetime fracture rates. In addition, Kanis et al. proposed that BMD values of 1–2.5 standard deviations below the young adult mean be designated as “osteopenic.” Such values identify individuals at increased risk for fracture, but for whom a diagnosis of osteoporosis would not be justified since it would mislabel far more individuals than would actually be expected ever to fracture. Of course, it would still be possible to make a clinical diagnosis of osteoporosis based on a prior fragility fracture.
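The BMD-based categories described above can be sketched in a few lines of Python. This is only an illustration: the function names and the reference mean and standard deviation are hypothetical, and the handling of values falling exactly on a threshold is an assumption rather than something stated in the text. In practice the reference values depend on the skeletal site, the instrument, and the reference population.

```python
def t_score(bmd, young_adult_mean, young_adult_sd):
    """Return how many standard deviations a BMD value lies from the young adult mean."""
    if young_adult_sd <= 0:
        raise ValueError("young_adult_sd must be positive")
    return (bmd - young_adult_mean) / young_adult_sd


def classify(t):
    """Map a T-score to the BMD-based categories described in the text.

    Assumption: a value exactly at -2.5 counts as osteoporosis and a value
    exactly at -1.0 counts as normal.
    """
    if t <= -2.5:
        return "osteoporosis"
    if t < -1.0:
        return "osteopenia"
    return "normal"


# Hypothetical reference values in g/cm^2, for illustration only.
REFERENCE_MEAN = 0.94
REFERENCE_SD = 0.12

for bmd in (0.95, 0.75, 0.60):
    t = t_score(bmd, REFERENCE_MEAN, REFERENCE_SD)
    print(f"BMD {bmd:.2f} g/cm^2 -> T-score {t:+.2f} -> {classify(t)}")
```

A classification of this kind says nothing about a prior fragility fracture, which, as noted above, can still support a clinical diagnosis on its own.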

This approach has proven useful for clinical management but has several limitations. Applying this criterion to young people before peak bone acquisition is complete would be inappropriate. The BMD measurement is itself subject to several confounding factors, including bone size and degenerative changes, such as aortic calcification and osteophytes, that induce artifacts. As BMD correlations among skeletal sites are not strong, designating a person “normal” based on a single site, for example, the hip, necessarily overlooks individuals with low bone density elsewhere, such as the lumbar spine. It seems reasonable to suppose that adjustment of bone density readings for such factors as body size and bone geometry might improve the accuracy of this technique to identify individuals at highest risk for fracture. Finally, studies indicate that although individuals with low BMD are at greater relative risk of fracture, many fractures in the population are experienced by individuals with bone mass measurements in the normal to osteopenic range by WHO criteria. The FRAX tool has provided clinicians with improved ability to predict fracture risk in women and men over 40 years of age by combining BMD with clinical risk factors for fractures (see Chapter 66 —Leslie). Altogether, it should be evident that whereas the WHO guidelines provide an operational definition of osteoporosis to facilitate clinical diagnosis, the BMD-based guidelines are of limited use to investigators whose interest is the nature and causes of osteoporosis. Knowledge of a low bone density at a particular point in time offers no information regarding the adequacy of peak bone mass attained, the amount of bone that may have been lost, the rate of bone loss, or the quality of bone that remains.

1.2

Material and structural basis of skeletal fragility

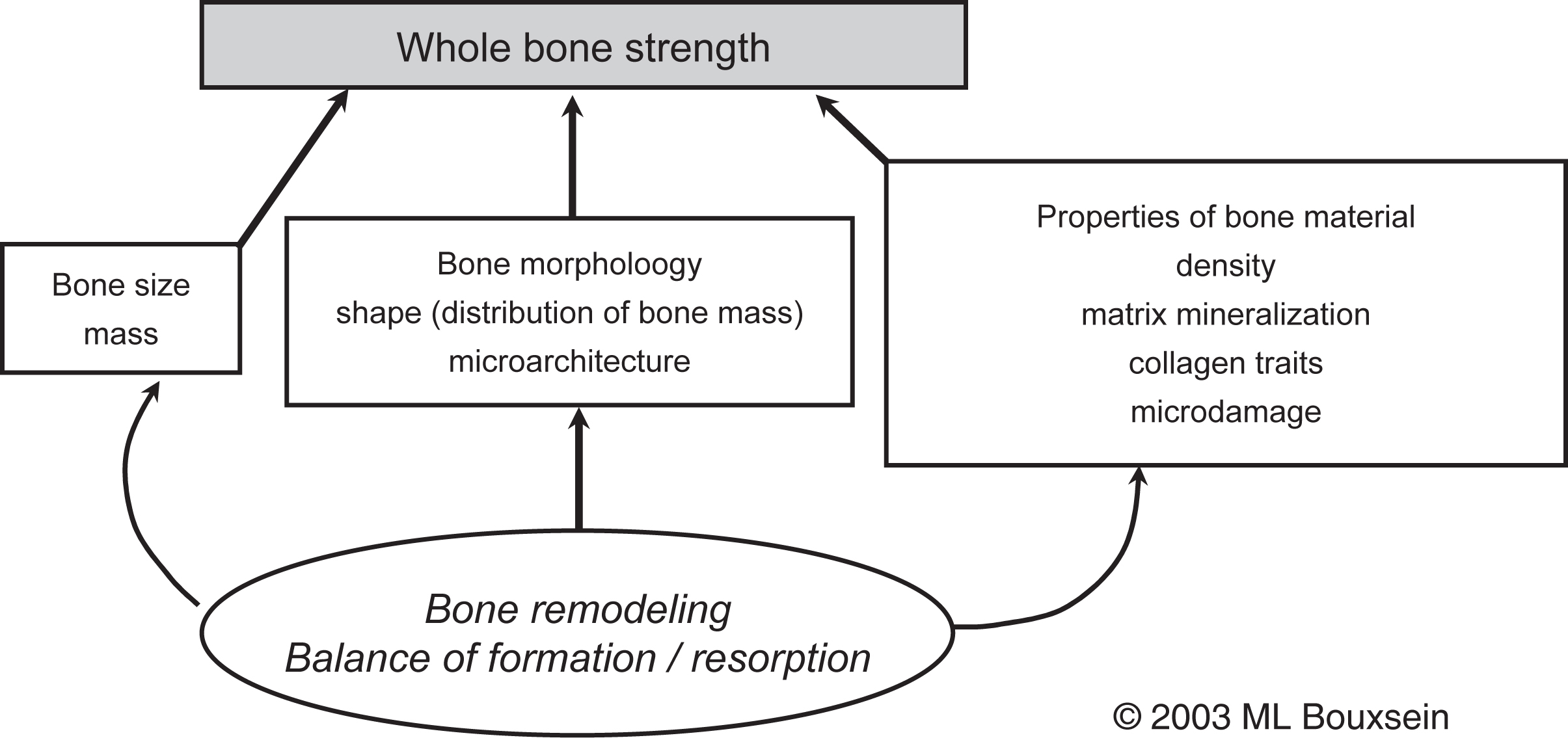

The need to understand the broader array of factors that influence skeletal fragility was supported by the 2001 Consensus Development Conference that defined osteoporosis as “a disease characterized by low bone strength, leading to enhanced bone fragility and a consequent increase in fracture risk”. This definition underscores the role of bone strength and implies that understanding bone strength is key to understanding fracture risk (Fig. 1.1).

The enhanced fragility associated with osteoporotic fractures has been attributed to several factors, chief among them low bone mass and microarchitectural deterioration. Implicit in this view is that osteoporosis results from deficits in the amount and structure of bone, but that the residual bone is not, in contrast to osteomalacia, grossly undermineralized. However, some data challenge this view, indicating that subtle changes in bone matrix properties, such as the degree of mineralization and extent of collagen cross-linking, may also contribute to skeletal fragility.

For many years the prevailing view has been that osteoporosis develops through excessive loss of bone mass. Only recently has attention been drawn to abnormalities in bone acquisition during early life as a basis for subsequent bone fragility (see Chapter 36 —DeMeglio). This latter issue notwithstanding, the dominant model of osteoporosis among investigators in the field has, until recently, emphasized only the amount and, sometimes, the distribution of bone tissue. However, the great overlap in bone density between individuals with and without fracture indicates the limitations of such a model to account adequately for individual differences in fracture susceptibility. In other words, properties besides bone mass and BMD may contribute to skeletal fragility.

The ability of a bone to resist fracture (or “whole bone strength”) depends on the amount of bone (i.e., mass), its spatial distribution (i.e., shape and microarchitecture), and the intrinsic properties of the materials that comprise it (see Chapter 13 —Karim et al.). Bone remodeling, specifically the balance between formation and resorption, is the biologic process that mediates changes in the traits that influence bone strength. Thus diseases and drugs that have an impact on bone remodeling will influence bone’s resistance to fracture. Due to a combination of reduced bone mass and changes in the structural and material properties of bone, whole bone strength declines markedly with age. For instance, laboratory studies of human cadaveric specimens have shown that the strength of the proximal femur and of the vertebral body is 2- to 10-fold lower in older people than in young individuals.

In considering these determinants of bone strength, several important concepts must be kept in mind. First, unlike most engineering materials, bone continually adapts to changes in its mechanical and hormonal environment and is capable of self-renewal and repair via the processes of modeling and remodeling (see Chapter 31 —Parfitt). Thus in response to increased mechanical loading, bone may adapt by altering its size, shape, matrix properties, or a combination of these. This type of adaptation is readily seen in the greater size of the bones in the dominant versus nondominant arm of tennis players. In addition, favorable changes in bone geometry may occur in response to deleterious changes in bone matrix properties. For example, in a mouse model of osteogenesis imperfecta, a defect in the collagen that leads to increased bone fragility can be compensated for by a favorable change in bone geometry to preserve whole bone strength. Thus the loss of bone strength with age likely reflects the ongoing skeletal response to changes in its hormonal (e.g., a decline in gonadal steroids) and mechanical (e.g., decreased physical activity) environments.

A second important concept concerns the hierarchical nature of the factors that influence whole bone strength. Thus properties at the cellular, matrix, microarchitectural, and macroarchitectural levels may all impact bone mechanical properties. Importantly, though these various factors are interrelated, it cannot be expected that changes in a single property will be solely predictive of changes in bone mechanical behavior.

The strength of most bones is influenced by the mass and the structural and material properties of both trabecular and cortical bone. The mechanical behavior of trabecular bone is influenced by many factors; however, the strongest determinants are apparent density (or volume fraction, the fraction of total tissue volume actually occupied by bone) and the microstructural arrangement of the trabecular network. Sampled over a wide range of densities, the stiffness and strength of trabecular bone are related to density in a nonlinear fashion, such that the change in strength is disproportionately greater than the change in density. For example, a 25% decrease in density, approximately equivalent to 15 years of age-related bone loss, would be predicted to cause a 44% decrease in the stiffness and strength of trabecular bone. However, given the heterogeneous nature of trabecular bone, it is clear that density alone cannot explain all of the variation in trabecular bone mechanical properties. Both empirical observations and theoretical analyses indicate that trabecular microarchitecture plays an important role (see Section 1.2.1).
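The arithmetic behind the 25% and 44% figures can be reproduced with a simple power law. The sketch below assumes that strength scales with the square of relative density; the exponent of 2 is inferred from the numbers quoted above and is not stated in the text, and the function name is hypothetical.

```python
def relative_strength(relative_density, exponent=2.0):
    """Strength relative to baseline under an assumed power law.

    Assumption: strength is proportional to density ** exponent, with
    exponent = 2 chosen because it reproduces the figures quoted in the text.
    """
    if relative_density < 0:
        raise ValueError("relative_density must be non-negative")
    return relative_density ** exponent


density_loss = 0.25  # a 25% decrease in apparent density
strength_loss = 1.0 - relative_strength(1.0 - density_loss)
print(f"{density_loss:.0%} density loss -> {strength_loss:.1%} predicted strength loss")
```

With an exponent of 2, a relative density of 0.75 gives a relative strength of 0.5625, that is, a loss of about 44%. A larger exponent would make the predicted loss steeper still.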

The primary determinants of the biomechanical properties of cortical bone include porosity and the degree of mineralization of the bone matrix. Indeed, over 80% of the variation in cortical bone stiffness and strength is accounted for by a power–law relationship with mineralization and porosity as explanatory variables. Other properties that influence cortical bone mechanical behavior include, but are not limited to, its histologic structure (primary, lamellar vs osteonal bone), the collagen content and orientation of collagen fibers, extent and nature of collagen cross-linking, the number and composition of cement lines, and the presence of fatigue-induced microdamage. A few of the factors that influence both the structural and material behavior of bone will be briefly presented in the following sections.

1.2.1

Role of bone microarchitecture

Although bone density is among the strongest predictors of the mechanical behavior of trabecular bone, both empirical observations and theoretical analyses show that aspects of the trabecular microarchitecture influence trabecular bone strength as well. Trabecular architecture can be described by the number and shape of the trabecular elements and their orientation. The trabecular structure is generally characterized by the number of trabeculae in a given volume, their average thickness, the average distance between adjacent trabeculae, and the degree to which trabeculae are connected to each other, as well as the degree to which they are plate-like or rod-like. Previously, assessment of trabecular microarchitecture was possible only by two-dimensional histomorphometry. However, imaging modalities such as high-resolution microcomputed tomography, high-resolution peripheral quantitative computed tomography (HR-pQCT), and magnetic resonance imaging now allow for three-dimensional (3D) assessment of trabecular microstructure on excised bone specimens and in vivo at peripheral skeletal sites (see Chapter 64 —Biver). Such techniques have progressed to the point where it is now possible to assess individual trabecular plate and rod microstructure separately.

Laboratory studies have demonstrated moderate-to-strong correlations between trabecular bone architecture and biomechanical properties of trabecular bone. However, trabecular bone microarchitecture is generally strongly correlated with trabecular bone volume, and therefore discerning the independent effects of specific architectural features on bone mechanical properties has proven challenging. Nonetheless, Ulrich et al. reported that the inclusion of indices of trabecular architecture, assessed by 3D microcomputed tomography, enhanced prediction of the biomechanical properties of human trabecular bone. Analytical studies have shown how specific changes in trabecular architecture may influence trabecular bone mechanical behavior. For example, an analytical model of vertebral trabecular bone showed that for the same decline in bone mass, loss of trabecular elements was two to five times more deleterious to bone strength than thinning of the trabecular struts, implying that maintaining connectivity of the trabecular network is critical. This finding may be explained by examining one potential mechanism by which individual trabecular elements may fail.

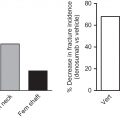

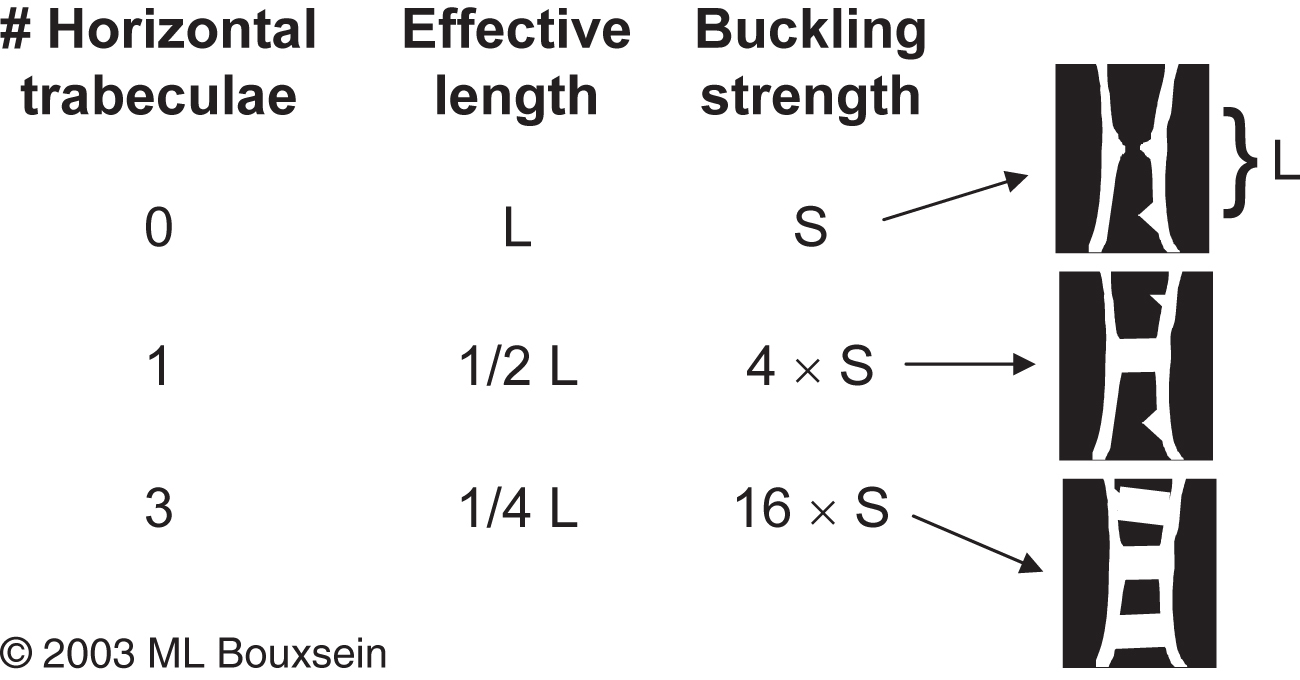

Bell proposed that isolated trabeculae may fail by buckling, which describes the failure mode of a long slender column. In this case the critical buckling load (or buckling strength) increases with the elastic modulus of the column and with its cross-sectional dimensions (through the second moment of area of the cross-section) and is inversely proportional to the square of the unsupported length of the column (see Chapter 13 —Karim). Therefore loss of horizontal trabecular elements leads to a marked increase in the unsupported length of a trabecular strut, markedly decreasing its buckling strength. Conversely, preservation of one or more horizontal struts can profoundly influence trabecular bone buckling strength with very little change in bone mass. This concept is illustrated in Fig. 1.2, which shows the theoretical effect of adding one or more horizontal struts on trabecular bone buckling strength.
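The dependence of buckling strength on unsupported length can be illustrated with Euler's classical formula for an ideal slender column, in which the critical load equals pi squared times the elastic modulus times the second moment of area, divided by the square of the effective length. The sketch below is an idealization under stated assumptions: the strut is treated as a straight, pin-ended cylinder, each horizontal strut is assumed to brace the vertical strut perfectly at evenly spaced points, and the modulus, diameter, and length are hypothetical values chosen only for illustration.

```python
import math


def circular_second_moment(diameter):
    """Second moment of area of a solid circular cross-section."""
    return math.pi * diameter ** 4 / 64.0


def euler_buckling_load(elastic_modulus, second_moment, unsupported_length, k=1.0):
    """Euler critical load for an ideal slender column.

    k is the effective-length factor (1.0 for a pin-ended column).
    """
    if unsupported_length <= 0:
        raise ValueError("unsupported_length must be positive")
    return math.pi ** 2 * elastic_modulus * second_moment / (k * unsupported_length) ** 2


# Hypothetical values for illustration only (SI units).
E = 10e9       # elastic modulus, Pa
D = 100e-6     # strut diameter, m
LENGTH = 1e-3  # length of the vertical strut with no horizontal support, m

moment = circular_second_moment(D)
baseline = euler_buckling_load(E, moment, LENGTH)

for n_struts in range(4):
    # n evenly spaced horizontal struts split the column into n + 1 segments.
    segment = LENGTH / (n_struts + 1)
    load = euler_buckling_load(E, moment, segment)
    print(f"{n_struts} horizontal struts: buckling load x{load / baseline:.0f}")
```

Under these assumptions a single horizontal strut at mid-height halves the unsupported length and quadruples the buckling load, while adding almost no bone mass. Real trabeculae are neither perfectly straight nor perfectly braced, so the factor should be read as an upper-bound illustration of the trend.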

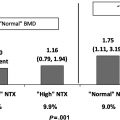

Another potential mechanism whereby trabecular bone strength declines with increased bone resorptive activity is that resorption cavities themselves serve as sites of local weakness where cracks in the trabeculae may initiate. van der Linden et al. evaluated this possibility using an analytical model of vertebral trabecular bone, wherein they induced a 20% decline in bone mass either by thinning the entire trabecular structure or by randomly introducing resorption cavities. They made two important observations. First, in both cases, the predicted decline in vertebral trabecular bone strength was larger (30% for trabecular thinning and 50% for introduction of resorption cavities) than the decline in bone mass. Second, the reduction in bone strength was greater when bone loss occurred by introduction of resorption cavities than by trabecular thinning. Altogether, these observations confirm the deleterious impact of high bone resorption on trabecular bone strength, even in the presence, but particularly in the absence, of increased bone formation, and provide a partial explanation for why small or even undetectable changes in bone mass due to antiresorptive therapy can have marked effects on vertebral fracture risk.

The importance of trabecular bone microarchitecture has been supported by in vivo assessment of bone microarchitecture, demonstrating altered trabecular and cortical microarchitecture in subjects with fragility fractures compared to age-matched controls with no fractures (see Chapter 16 —Biver).

Moreover, data from iliac crest biopsies and in vivo imaging obtained during clinical trials suggest that maintenance of trabecular architecture with antiresorptive therapy or improvement of trabecular architecture with teriparatide, abaloparatide, or romosozumab may contribute to the antifracture efficacy of these agents. Altogether, these clinical observations point to an important role of trabecular architecture in fragility fractures, particularly at skeletal sites rich in trabecular bone such as the spine.

This discussion has focused on trabecular microarchitecture and its role in bone strength because, until the past decade, most studies focused on trabecular bone. Yet, 80% of skeletal mass is cortical bone, and unlike trabecular bone, loss of cortical bone is not self-limiting. If subject to imbalanced remodeling with resorption exceeding formation over a prolonged period, trabeculae will eventually disappear and trabecular bone loss will cease. In contrast the cortex is never completely lost and so can continue to lose bone with age. Using HR-pQCT, Zebaze et al. estimated the loss of cortical and trabecular bone from the distal radius of adult women and found that between 50 and 80 years of age, 68% of total bone mass lost at the distal radius was cortical, while only 32% was trabecular. Of the total amount of bone lost, only 16% was lost between ages 50 and 64 years, whereas 84% was lost after age 64 years and most of that was cortical, not trabecular. Cortical bone was lost primarily by trabecularization of the endocortical surface and increased cortical porosity. Indeed, in vivo imaging studies have shown cortical porosity increasing with age. Altogether, these findings may have important implications for the pathogenesis of fracture with advancing age, particularly at sites with a significant amount of cortical bone, such as the hip.

1.3

Role of bone matrix properties

In addition to macro- and microarchitecture, features of the bone matrix itself influence bone mechanical properties, particularly in cortical bone. Characteristics of the bone matrix that may affect bone mechanical properties include (but are not limited to) the relative ratio of inorganic (i.e., mineral) to organic (i.e., water, collagen, and noncollagenous proteins) components; the degree of matrix mineralization; mineral crystal size and maturation; the extent and nature of collagen cross-links; the amount and type of noncollagenous proteins; and the amount and nature of matrix microdamage (see Chapter 13 —Karim).

1.3.1

Matrix mineralization

During the course of bone remodeling, the initial wave of resorption removes both matrix and mineral. The subsequent bone formation phase involves an initial laying down of organic matrix, with an initial component of mineralization occurring after the new matrix reaches a thickness of about 20 μm. Initially, mineralization proceeds at a rapid pace, the new bone achieving most of its ultimate mineral content within a few weeks. However, after perhaps two months, the rate of mineralization slows substantially and continues thereafter at a linear rate. It appears that the bone never actually becomes saturated with mineral, and that mineralization continues for at least 5 years, being interrupted only when a new wave of resorption occurs to remove that bone and start the process over again. Thus the rate at which new remodeling units are brought into play, referred to as the “birthrate” of new remodeling osteons (estimated in biopsy material as the “activation frequency”), constitutes a primary mechanism by which bone mineralization is influenced (see Chapter 30 —Parfitt).

It is well established that the degree of matrix mineralization strongly influences the mechanical behavior of cortical and, to a somewhat lesser extent, trabecular bone. The elastic modulus and strength of cortical bone are positively related to the degree of matrix mineralization such that a modest 7% increase in tissue mineral density is associated with a threefold increase in bone stiffness and a doubling in breaking strength. Thus it seems inescapable that undermineralization would promote bone fragility. However, the ability of cortical bone to absorb energy may either increase (if the bone is relatively undermineralized to begin with) or decrease (if the bone is already fully mineralized) with increasing tissue mineral density.

Among the first efforts to assess the composition of human osteoporotic bone was that of Burnell et al., who compared iliac crest biopsies from osteoporotic postmenopausal women with vertebral compression fractures to biopsies from normal controls. As expected, osteoporotic bone was less dense. However, the fraction of mineral per gram of bone tissue was also reduced. Moreover, within the mineral phase, carbonate and the calcium-to-phosphorus ratio were decreased, while sodium and magnesium content were increased, yet the same biopsies gave no hint of osteomalacia. Although these results describe average values for the entire study cohort, they reveal considerable heterogeneity in bone composition, even within this group of clinically homogeneous patients. Most patients had normal results; 25% showed undermineralized matrix, and only a few showed decreased matrix with normal mineralization. The subjects with decreased mineral fraction were those who also had an increased content of sodium and magnesium in the mineral phase, suggesting the presence of skeletal calcium deficiency.

Drug therapies that decrease bone turnover will eventually increase the degree of matrix mineralization by prolonging the period of secondary mineralization. In contrast, agents that increase bone turnover may lead to a transient decrease in the degree of matrix mineralization as new remodeling units are initiated and new bone is laid down. Thus iliac crest biopsies from postmenopausal women treated with antiresorptive therapy (calcium plus vitamin D, raloxifene, risedronate, or alendronate) showed an increase in the degree of mineralization that mirrors the suppression of bone turnover, whereas iliac crest biopsies from men treated with teriparatide showed a slight decrease in the degree of mineralization, and both men and women treated with teriparatide showed an increase in the heterogeneity of mineralization. A decrease in mineralization density and an increase in heterogeneity of mineralization were also seen in postmenopausal women with osteoporosis who had been treated for 1 year with teriparatide following prior treatment with bisphosphonates. The SHOTZ trial compared the effects of 6 and 24 months of teriparatide or zoledronic acid treatment on matrix mineralization in postmenopausal women with osteoporosis. At both time points, mineralization density was higher with zoledronic acid than with teriparatide, and the opposite was true for heterogeneity of mineralization.

Another aspect of matrix mineralization that may influence skeletal fragility is the spatial distribution and heterogeneity of mineralization. Individuals with vertebral fractures have a more heterogeneous distribution of mineralization density values than individuals of similar age without fractures. Thus some individuals with fractures had low mineralization density, while others had high mineralization density, resulting in a bimodal distribution in the fracture group, compared to the typical Gaussian distribution seen in a group of normal subjects. This finding suggests that the fracture group may have an impaired capacity to regulate bone remodeling to avoid these extremes of tissue mineralization that are likely to be sites of mechanical weakness. Additional data regarding heterogeneity of mineralization density are provided by evaluation of iliac crest biopsy specimens after osteoporosis therapy. In these studies the heterogeneity of mineralization density values increases following intermittent PTH therapy and decreases following bisphosphonate therapy, yet both treatments are associated with reduced fracture risk. In addition, the mechanical properties of vertebral trabecular bone are unrelated to the heterogeneity of tissue mineralization. Thus although theoretical arguments suggest that increasing material homogeneity may negatively impact bone’s resistance to fracture, empirical evidence contradicts this view. Clearly, further studies are needed to unravel the complex relationships between material heterogeneity, skeletal fragility, and fracture risk.

1.3.2

Collagen characteristics

Bone is a composite material with two primary constituents, mineral and collagen. Although collagen has long taken a back seat to mineral with regard to skeletal fragility, mounting evidence indicates an important role for age- and disease-related changes in collagen content and structure. The majority of evidence suggests that in normal bone, the mineral provides stiffness and strength, whereas collagen affords bone’s ability to deform and absorb energy before fracturing. The dramatic fragility seen in osteogenesis imperfecta underscores the potential for collagen abnormalities to influence bone strength, though it is now recognized that tissue mineralization is altered in osteogenesis imperfecta as well. However, more subtle alterations in collagen, as noted by polymorphisms in the COL1A1 gene, have also been associated with fracture risk independent of BMD status. Posttranslational modifications of collagen have also been shown to influence bone mechanical properties. In particular, increased nonenzymatic glycation of collagen is associated with reduced ability of bone tissue to absorb energy before fracturing and has been implicated in the fragility associated with type 2 diabetes, in which individuals have normal to high BMD, but increased fracture rates. In sum, the specific contribution of collagen abnormalities to age-related skeletal fragility remains to be defined.

1.3.3

Microdamage

Throughout life, physiologic loading of the skeleton produces fatigue damage in bone. It appears that microdamage initiates activation of remodeling, which is triggered by osteocyte apoptosis (see Chapter 7 —Bonewald), presumably to repair the damaged tissue. This intriguing observation suggests that one important role of bone remodeling is to repair fatigue-induced microdamage in bone. It has therefore been hypothesized that excessive suppression of bone turnover may reduce the capacity of bone to repair microdamage and eventually lead to reduced mechanical properties. It has been suggested that osteonecrosis of the jaw and atypical femoral fractures may be caused by too little turnover, but this has not been clearly established. Debate regarding the optimal level of bone turnover to prevent architectural deterioration while preserving the ability of bone to maintain calcium homeostasis, to respond to altered mechanical loading, and to repair microdamage is ongoing (see Chapter 31 —Kostenuik). Indeed, in normal dogs treated with high-dose bisphosphonate, the observed decrease in bone toughness is not related to increased microdamage. Furthermore, the mechanical behavior of vertebral trabecular bone is largely independent of the amount of preexisting microdamage. Interestingly, the ability of a material to undergo “microcracking” may actually increase its toughness. As a simple explanation for this latter phenomenon, consider that when a material with a crack in it is loaded, energy is accumulated at the tip of the crack. This energy can be dissipated either by growth of the crack or by the generation of microcracks near the tip of the larger crack. In this latter case, growth of the larger crack is inhibited, and the material can absorb more energy (i.e., it is tougher) before this larger crack eventually progresses through the material to cause failure. The specific characteristics of bone that confer “good” microcracking versus “bad” microdamage remain to be elucidated. Altogether, the influence of physiologic levels of microdamage on skeletal fragility in vivo remains an open question.

1.4

Summary

At the beginning of this chapter, we discussed the limitations of a bone mass–based diagnosis of osteoporosis. A primary difficulty with such a definition is that its sensitivity to factors known collectively as “bone quality” has not been clarified, and it is tempting to attribute the diagnostic ambiguities of BMD measurements to their failure to account for these features. Although these concerns persist, the fact that information contained in the BMD measurement accounts in part for some of the important geometric, material, and microarchitectural properties solidifies its rationale as a diagnostic criterion. Certainly, any substantial degree of matrix undermineralization would be reflected in a lower BMD, and trabecular disruption of sufficient magnitude to be mechanically important would also register as a bone mineral deficit, and therefore as a lower BMD. Features that would not be captured by a BMD assessment include bone turnover, collagen characteristics, ultrastructural morphology such as cement lines, and the extent and type of accumulated fatigue damage.

The question remains whether osteoporosis should be viewed as one or more unique diagnostic entities, as is the case for Paget’s disease, or whether it is more useful to consider it a condition of skeletal fragility resulting from a stochastic process, in which contributory factors include age, body size, adequacy of peak bone mass, degree of adult bone loss, and accumulation of qualitative impairments, all of which could be influenced by genetic and environmental factors. Since the overall trajectory over time of adolescent bone acquisition and adult bone loss appears to be universal, the only basis for considering osteoporosis to be one or more distinct entities would be a demonstration that its qualitative abnormalities, such as those discussed previously, are restricted to those patients who have suffered from a fragility fracture. Although evidence remains incomplete, it seems unlikely that such specificity will be validated for most of these abnormalities.
