Summary of Key Points

- Lung cancer is increasingly understood at the molecular level; N-of-1 trials are becoming more relevant with the use of biomarker assessment and targeted therapies.
- The failure of promising agents in randomized studies has prompted reconsideration of the standard dose-finding paradigm in early phase trials, with the recognition that improved drug development strategies for single agents and combination therapies are required.
- The choice of end points and trial design options in the phase II and phase III setting is driven by the purported mechanism of action of the drug and the availability of a biomarker to “enrich” the patient population, leading to either (a) larger randomized phase II, phase II/III, or phase III trials (all-comers design with retrospective subgroup assessment), or (b) smaller (including nonrandomized) phase II trials in an enriched subpopulation targeting larger differences.
- Adaptive designs are becoming a reality with advances in mobile computing, electronic data capture, and integration of research records with electronic medical records.
- Master protocols incorporating a central infrastructure for screening and identification of patients who can be funneled into multiple subtrials testing targeted therapeutics have become an efficient way to conduct definitive trials in lung cancer.
- Newer approaches to clinical trial end points and design strategies that challenge the historical paradigm of drug development are critical to accelerate the drug development process so that the right therapies can be delivered to the right patients.

In oncology, the development of new therapeutics typically follows the phase I, phase II, and phase III drug development paradigm. In phase I, the primary goal is to understand the safety profile of a new treatment in a small group of patients before further investigation. In the phase II setting, the primary goal is to determine if there is an efficacy signal worthy of further investigation; a secondary objective is to gain a better understanding of the treatment’s safety. Phase II trials may have a single arm or may be randomized, are conducted in a homogeneous study population, and vary in size from fewer than 100 patients to as many as 300 patients. If the drug is considered safe and has a promising efficacy signal, then a phase III trial is initiated. The primary goal of the phase III trial is to compare the new treatment with the standard of care to demonstrate a clinical benefit or, in some cases, cost-effectiveness. Phase III trials are usually large, comprising a few hundred to thousands of patients, and they are conducted in a homogeneous group of patients at multiple institutions.

As biomarker assessment and use of targeted therapies increase in cancer treatment, N-of-1 trials—studies in which an individual is the single subject of study—are becoming more relevant. Biomarker assessment is a critical aspect of targeted therapy because biomarkers can identify patients who are more likely to benefit from a particular treatment. The Biomarkers Definitions Working Group defined a tumor marker or a biomarker as “a characteristic that is objectively measured and evaluated as an indicator of normal biologic processes, pathogenic processes, or pharmacologic responses to a therapeutic intervention.” In oncology, the term biomarker refers to a broad range of measures derived from tumor tissues, whole blood, plasma, serum, bone marrow, or urine. In principle, the pathway from basic discovery of a biomarker to its use in clinical practice is similar to a traditional drug development process, but there are some basic differences, which will be described here. An extensive guideline for reporting studies on tumor markers has been developed and published.

Biomarker-based trials deviate from the standard paradigm for developing a treatment or regimen. In the context of biomarkers, a phase I study tests the methods of assessing marker alteration in normal and tumor tissue samples. Results from this study may help to determine the cut points for quantitative assessment and meaningful interpretation of test results. The feasibility of obtaining the specimens, as well as the reliability and reproducibility of the assay, needs to be established at this stage. A phase II study is typically a careful retrospective assessment of the marker to establish its clinical usefulness. In phase III trials, the marker is prospectively evaluated and validated in a large, multicenter population that provides adequate power to address issues of multiple testing.

For a biomarker to be useful in clinical practice, its assay results should be accurate and reproducible (analytically valid) and its value should associate with the outcome of interest (clinically valid). Further, for a biomarker to be useful in clinical practice there must be a specific clinical question, proposed alteration in clinical management, and improved clinical outcomes (the so-called clinical utility). An elaborate tumor marker utility grading system was developed that defined the data quality or level of evidence needed for grading the clinical utility of markers. In brief, level I evidence, which is similar to a phase III drug trial, is considered definitive. Levels II to V represent varying degrees of hypothesis-generating investigations, similar to phase I or II drug trials.

The high failure rate of phase III trials in oncology, including lung cancer, may be attributable to several factors, including inaccurate predictions of efficacy based on the hypothesis-generating phase II trials; failure to identify an appropriate dose or schedule (the so-called optimal dose) in a phase I trial; or problems with the phase III trial design. Assessing the safety profile and establishing the maximum tolerated dose remain the primary focus of phase I trials, including trials of targeted therapies and vaccines. However, it is becoming more common for phase I trials to assess preliminary efficacy signals and identify the subsets of patients most likely to benefit from the new treatment. Tumor size response metrics based on longitudinal tumor size models are promising new end points in phase II clinical oncology studies; however, these metrics have not yet been validated for routine use in clinical trials. Increasingly, response is measured as progression-free survival rather than with the Response Evaluation Criteria in Solid Tumors (RECIST). Variants of progression-free survival, such as disease control rate at a predetermined time point, have been shown to be acceptable alternate end points for rapidly screening new agents in phase II trials in patients with extensive-stage small cell lung cancer and advanced-stage non-small cell lung cancer (NSCLC). For phase III trials, overall survival, defined as the time from random assignment or registration to death from any cause, is still the criterion standard end point because it is a measure of direct clinical benefit to a patient. As an end point, overall survival is unambiguous and can unequivocally assess the benefit of a new treatment relative to the current standard of care.
Although improving overall survival remains the ultimate goal of new cancer therapy, an intermediate end point such as disease-free survival in early-stage disease has been used in the phase III setting to evaluate the treatment effect of new oncologic products. However, overall survival continues to be an appropriate end point in trials without an intermediate end point or validated surrogate end points, such as for phase III trials in extensive-stage small cell lung cancer and advanced-stage NSCLC.

This chapter is organized into sections that focus on the end points and design considerations for cytotoxic agents and targeted therapies for early phase, dose-finding trials; phase II trials; and phase III trials. Where possible, examples of ongoing or completed lung cancer clinical trials are used to explain the concepts. The chapter ends with a brief summary and a discussion of future perspectives on clinical trial design in lung cancer research.

Early Phase Trials

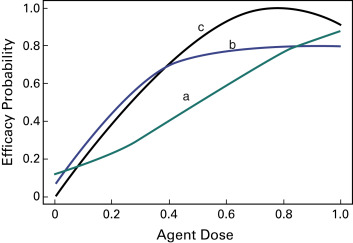

Historically, dose-finding trials in oncology have been designed to establish the maximum tolerated dose of a therapeutic regimen, with safety as the primary outcome. These trials, which are usually the first to test a new agent in humans, may include patients with multiple tumor types when no other treatment is available. A fundamental assumption of these designs is that toxicity and efficacy are directly related to dose, that is, the higher the dose, the greater the risk of toxicity and the greater the chance of efficacy. Although this paradigm works for cytotoxic agents, it is not readily applicable for molecularly targeted therapies, vaccines, or immunotherapy. The postulated mechanisms of action for these agents are not straightforward because (a) the dose–efficacy curves are usually unknown and may follow a nonmonotone pattern such as a quadratic curve or an increasing curve with a plateau (Fig. 59.1), and (b) dose–toxicity relationships are expected to be minimal.

With regard to targeted therapies, erlotinib, gefitinib, and bevacizumab have demonstrated clinical benefit in several cancers, including lung cancer, whereas others such as R115777 and ISIS 3521 have produced negative results. Problems with the design of phase I studies provide plausible explanations for the lack of clinical activity seen with these drugs, despite understanding the pathway of action for such drugs. The phase I studies were designed primarily to assess maximum tolerated dose, and the patients enrolled in these studies were unselected (e.g., all-comers vs. patients whose tumors express a specific molecular target). Consider, for example, an immunotherapy administered to stimulate the patient’s own immune system to fight the tumor. Overstimulation of the immune system could interfere with the drug’s efficacy or prove harmful for the patient.

Ideally, dose-finding studies for immunotherapies or targeted agents would include a secondary measure of efficacy to identify the biologically optimal dose or the minimum effective dose, instead of the maximum tolerated dose. However, barriers to measuring efficacy in early phase trials are the absence of validated assays or markers of efficacy, the time required to measure an efficacy outcome, and incomplete understanding of drug metabolism and its pathway. These limitations also preclude patient selection for phase I trials, although an enrichment strategy is being used more often, during either the dose-escalation or the dose-expansion phase, to identify subsets of patients who are likely to benefit most from the treatment. Successful examples of using an enrichment strategy in early phase trials include the development of vemurafenib to treat patients with melanoma positive for v-raf murine sarcoma viral oncogene homolog B (BRAF) mutation and crizotinib for patients with NSCLC positive for anaplastic lymphoma kinase (ALK) rearrangement.

Phase I trial designs can be broadly categorized as either model based or rule based (also called algorithm based). In the rule-based designs, small numbers of patients are treated starting at the lowest dose level. The decision to escalate, deescalate, or treat additional patients at the same dose level is based on a prespecified algorithm related to the occurrence of unacceptable dose-limiting toxicity. The trial is terminated once a dose level is reached that exceeds the acceptable toxicity threshold. The initial dose level often is derived from animal studies or trials conducted in a different setting. The interval between successive dose levels is usually based on a modified Fibonacci sequence. Examples of rule-based designs commonly used in oncology studies include the traditional cohorts-of-three design and its variants, the accelerated titration design, and the two-stage design.
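The escalation logic of a rule-based design can be made concrete with a small simulation. The following is a minimal sketch of one common form of the traditional cohorts-of-three rule, without de-escalation: escalate after 0 of 3 dose-limiting toxicities (DLTs), expand to six patients after 1 of 3, and stop once two or more DLTs occur at a level. The function name `three_plus_three` and the true DLT probabilities are hypothetical and serve only as an illustration; actual protocols differ in their details.

```python
import random

def three_plus_three(true_dlt_probs, rng):
    """Simulate one trial; return the index of the declared maximum
    tolerated dose, or -1 if even the lowest level is too toxic."""
    level = 0
    while True:
        # Treat a first cohort of three patients at the current level.
        dlts = sum(rng.random() < true_dlt_probs[level] for _ in range(3))
        if dlts == 1:
            # Exactly one DLT in three: expand the cohort to six patients.
            dlts += sum(rng.random() < true_dlt_probs[level] for _ in range(3))
        if dlts >= 2:
            # Toxicity threshold exceeded: declare the previous level.
            return level - 1
        if level == len(true_dlt_probs) - 1:
            # The highest planned level was tolerated; stop there.
            return level
        level += 1

# Hypothetical true DLT rates for five dose levels (assumed values).
rng = random.Random(2024)
true_probs = [0.05, 0.10, 0.20, 0.35, 0.50]
picks = [three_plus_three(true_probs, rng) for _ in range(1000)]
print({lvl: picks.count(lvl) for lvl in sorted(set(picks))})
```

Repeating the simulation under different assumed toxicity curves shows how often such a rule selects each level, which is the kind of operating characteristic used to compare designs.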

The continual reassessment method introduced the concept of dose–toxicity models to guide the dose-finding process. The dose–toxicity model represents the investigator’s a priori belief in the likelihood of dose-limiting toxicity according to the delivered dose. The model is updated sequentially using cumulative patient toxicity data. Several modifications have been proposed to address the safety concerns associated with the original continual reassessment method, such as starting the trial at the lowest dose level, prohibiting skipping of dose levels during escalation, and requiring at least three patients at each dose level prior to escalation. The trials with a model-based design using the continual reassessment method have demonstrated better operating characteristics than trials with rule-based designs in simulation settings. Specifically, a higher proportion of patients are treated at levels closer to the optimal dose level, and fewer patients are needed to complete the trial. A key characteristic of all these designs, model based or rule based, is that they use only toxicity to guide dose-escalation and do not incorporate a measure of efficacy in the dose-finding process.
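The sequential updating described above can be sketched in a few lines. The example below is a simplified illustration, not the original method's specification: it assumes a one-parameter power working model, in which the DLT probability at dose i is `skeleton[i] ** exp(a)`, a normal prior on `a` with an assumed standard deviation, and a grid approximation of the posterior. The function name, the skeleton values, the target rate, and the prior are all hypothetical choices. The no-skipping restriction mentioned above is included.

```python
import math

def crm_next_dose(skeleton, doses_given, dlt_observed,
                  target=0.25, current=0, prior_sd=1.34):
    """Return the dose index recommended for the next patient."""
    grid = [-3 + 6 * k / 400 for k in range(401)]  # grid over parameter a
    weights = []
    for a in grid:
        # Log of the (unnormalized) normal prior density at a.
        log_w = -a * a / (2 * prior_sd ** 2)
        # Add the log-likelihood of the toxicity data observed so far.
        for d, y in zip(doses_given, dlt_observed):
            p = skeleton[d] ** math.exp(a)
            log_w += math.log(p) if y else math.log(1 - p)
        weights.append(math.exp(log_w))
    total = sum(weights)
    # Posterior mean DLT probability at each dose level.
    post_p = [
        sum(w * skeleton[i] ** math.exp(a) for a, w in zip(grid, weights)) / total
        for i in range(len(skeleton))
    ]
    # Pick the level whose estimated DLT rate is closest to the target.
    best = min(range(len(skeleton)), key=lambda i: abs(post_p[i] - target))
    # Safety modification: never escalate by more than one level.
    return min(best, current + 1)

# Hypothetical prior guesses of the DLT rate at five dose levels.
skeleton = [0.05, 0.12, 0.25, 0.40, 0.55]
print(crm_next_dose(skeleton, [0, 0, 0, 1, 1, 1], [0, 0, 0, 0, 1, 0], current=1))
```

Because the model is refit after every cohort, the recommendation can move down as well as up as toxicity data accumulate.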

Current statistical approaches extend the standard continual reassessment method in two directions to allow toxicity and efficacy outcomes to be modeled in a phase I trial. One example is the bivariate continual reassessment model, which uses a marginal logit dose–toxicity curve and a marginal logit dose–disease progression curve with a flexible bivariate distribution of toxicity and progression. Other examples are the design proposed by Thall and Cook that uses efficacy–toxicity trade-offs to guide dose finding; the dose-finding scheme proposed by Yin et al. using toxicity and efficacy odds ratios; and bivariate probit models for toxicity and efficacy proposed by Bekele and Shen, and Dragalin and Fedorov. Another statistical approach assumes that the observed clinical outcomes follow a sequential order: no dose-limiting toxicity and no efficacy, no dose-limiting toxicity but efficacy, or severe dose-limiting toxicity, which renders any efficacy irrelevant. In this case, the joint distribution of the binary toxicity and efficacy outcomes can be collapsed into an ordinal trinary (three-outcome) variable, which may be appropriate in the setting of vaccine trials and viral-reduction studies in patients with human immunodeficiency virus, among others.

The design and end-point considerations are further complicated in the study of combination therapies. Ideally, the underlying biologic rationale for the combination would be known; for example, whether the efficacy of the two agents is additive, complementary, or synergistic. Understanding the interaction between the drugs may help investigators to anticipate whether the toxicity profiles of the agents are overlapping or additive. Typically, a set of predetermined dose-level combinations is explored based on either the maximum tolerated dose of one agent or other preclinical data demonstrating synergy. The dose of one agent under investigation is escalated, while the dose of the second agent remains constant until a tolerable combination dose level is achieved. Often it is not feasible to explore all possible combination levels. Despite increased testing of combination treatments in oncology, few designs for dose escalation of two or more agents have been proposed. Gandhi et al. used a nonparametric, up-and-down, algorithm-based sequential design to explore 12 dose combinations out of a possible 16 combinations. The maximum-tolerated-dose combinations were chosen based on the highest tolerated dose of each agent, achieving a targeted dose-limiting toxicity rate less than 33%. Two maximum-tolerated-dose combinations were identified using this design: 200 mg neratinib plus 25 mg temsirolimus and 160 mg neratinib plus 50 mg temsirolimus.

The failure of promising agents in randomized studies has prompted reconsideration of the standard dose-finding paradigm, with the recognition that improved drug development strategies for single agents and combination therapies are required. Although the assumption of a monotonically increasing dose–toxicity curve is almost always appropriate from a biologic standpoint, a monotonically increasing relationship between dose and efficacy has been challenged by the recent development of molecularly targeted therapies, vaccines, and immunotherapies. Model-based designs are certainly not perfect or recommended for every dose-finding study, but they can be an attractive alternative to the traditional algorithm-based, up-and-down methods. However, the application of these designs to dose-finding studies in oncology has been limited for considerable scientific and pragmatic reasons. The acceptance and use of these designs may be quicker and easier if they are developed in concert with a clinical paradigm.

Phase II Trials

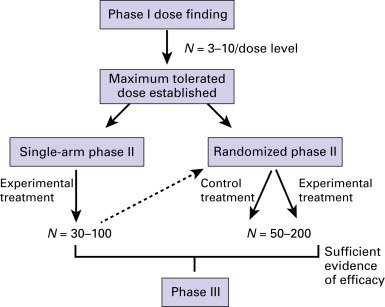

The primary objective of a phase II trial is usually to determine whether there is sufficient evidence of efficacy to warrant further evaluation of a treatment (Fig. 59.2). Secondary goals of phase II trials are to establish which patients are most likely to benefit from this treatment and to evaluate toxicity in a larger study population. Phase II trials are often called screening trials, because they screen new agents or regimens for definitive evaluation in a phase III study. These trials usually include the minimum number of patients necessary to achieve the study goals and are relatively short in duration so that the experimental treatment can continue to phase III testing. As with any phase of testing, the study goals dictate the specifics of design and end-point selection.

End-Point Considerations

Generally, different end points are used for phase II and III trials of the same treatment. For example, although overall survival is the criterion standard outcome for phase III studies, it is not time efficient to use it in phase II studies. For a phase II trial to be informative, the selected end point should be a surrogate for the phase III outcome of interest, and the magnitude of changes in the surrogate end point should relate directly to changes in the main end point. Because clinically relevant outcomes depend on so many disease- and treatment-related variables, validation of a surrogate outcome is challenging.

As discussed earlier, commonly used end points for phase II trials in patients with advanced disease are response rate, progression-free survival, and disease control rate; for trials in patients with early-stage disease, disease-free survival is frequently used. The best outcome measure for a phase II study depends on the experimental agent’s mechanism of action. For example, response rate was often used for cytotoxic treatments that were expected to affect survival by shrinking the tumor or reducing overall tumor burden. Since the early 2000s, trials of cytostatic agents, which are thought to prolong survival by shrinking the tumor or stabilizing tumor growth, have been designed to measure progression-free survival or disease control rate. In fact, there is evidence that stable disease predicts overall survival better than tumor response does, which is a reason for including measures of disease stability in chemotherapy trials. The effect of agents that act on the immune system rather than directly on the tumor may not be adequately captured by the traditional measures defined by RECIST; new criteria for immunotherapies have been developed.

The choice of a summary statistic for time-to-event outcomes, such as progression-free or disease-free survival, should also be disease and treatment related. The median times summarize the point at which the event of interest has occurred in at least 50% of study participants. A landmark time can be used to summarize a single time point, for example, the percentage of patients who are progression free at 6 months. The hazard ratio, which is a summary measure of the relative benefit found in the treatment arm averaged over the entire study period, places equal value on differences between the study arms at every point. For treatments expected to have a delayed effect, such as immunotherapies, the average may not represent the treatment’s efficacy; for these agents, a landmark time may be a better representation of the treatment effect. End points that may prove useful in the future are imaging-based measures or longitudinal biomarker measures.
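The difference between a median and a landmark summary is easiest to see on an estimated survival curve. The sketch below computes a Kaplan-Meier curve from follow-up times with censoring and then reads off both summaries. The function names and the follow-up data are hypothetical, and the code is an illustration rather than a replacement for validated statistical software.

```python
def kaplan_meier(times, events):
    """Return the Kaplan-Meier curve as a list of (time, survival) steps.

    events[i] is True when patient i had the event (e.g., progression or
    death) at times[i] and False when the patient was censored.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        t = data[i][0]
        tied = [e for u, e in data if u == t]  # all patients leaving the risk set at t
        n_events = sum(1 for e in tied if e)
        if n_events:
            survival *= 1 - n_events / at_risk
            curve.append((t, survival))
        at_risk -= len(tied)
        i += len(tied)
    return curve

def landmark_rate(curve, t0):
    """Estimated proportion event free at landmark time t0."""
    rate = 1.0
    for t, s in curve:
        if t <= t0:
            rate = s
    return rate

def median_time(curve):
    """First time at which the estimated survival drops to 50% or below."""
    for t, s in curve:
        if s <= 0.5:
            return t
    return None  # median not reached during follow-up

# Hypothetical progression-free survival data in months (False = censored).
months = [2, 3, 5, 6, 6, 8, 11, 12, 14, 15]
progressed = [True, True, False, True, True, False, True, False, True, False]
curve = kaplan_meier(months, progressed)
print("6-month progression-free rate:", landmark_rate(curve, 6))
print("median progression-free survival:", median_time(curve))
```

For a treatment with a delayed effect, two arms can share a similar median yet differ clearly at a later landmark, which is why the choice of summary should follow the expected shape of the curves.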

Randomized and Single-Arm Designs

Since the early 2000s, phase II trials have shifted from single-arm to randomized design. In a single-arm study, all participants receive the experimental treatment and study results are compared with historical data from patients receiving the standard of care. Studies with a single-arm design typically have 90% power and 5% one-sided type I error, with sample sizes ranging between 20 patients and 100 patients. The validity of this type of study depends on the availability of accurate historical data for the patient population. Biased historical data have been blamed for numerous failed phase III studies after a positive phase II study. In addition, single-arm biomarker studies cannot differentiate between a prognostic and predictive association with the clinical outcome.
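To illustrate how the stated error rates translate into a sample size, the sketch below searches for the smallest single-stage, single-arm design based on an exact binomial test. It assumes a binary end point (for example, response), a historical rate `p0`, and a target rate `p1`; these names, the function names, and the example rates are hypothetical and do not come from a specific trial.

```python
from math import comb

def binom_tail(n, r, p):
    """P(X >= r) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) for k in range(r, n + 1))

def single_arm_design(p0, p1, alpha=0.05, power=0.90, max_n=200):
    """Smallest n, with its rejection cutoff r, such that declaring the
    treatment promising when at least r of n patients respond keeps the
    one-sided type I error <= alpha under p0 and the power >= power under p1."""
    for n in range(1, max_n + 1):
        for r in range(n + 2):
            if binom_tail(n, r, p0) <= alpha:
                # r is the smallest cutoff controlling type I error for this n.
                if binom_tail(n, r, p1) >= power:
                    return n, r
                break
    return None

# Hypothetical historical response rate of 20% versus a target of 40%.
n, r = single_arm_design(0.20, 0.40)
print(f"enroll {n} patients; declare promising if at least {r} respond")
```

The search makes the dependence on the historical rate explicit: if the assumed `p0` is too low, the same cutoff yields a much higher false-positive rate, which is one way biased historical data lead to misleading positive phase II results.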

Randomized phase II studies avoid the problem of bias in historical data by directly comparing patients who have been randomly assigned to receive an experimental treatment or the standard of care. Randomized studies are typically two to four times larger than single-arm phase II studies and have larger error rates (e.g., type I error of at least 10%). A sufficiently powered, randomized phase II trial can differentiate between prognostic and predictive biomarkers.

Assessing Biomarker-Based Subgroups: Design Considerations

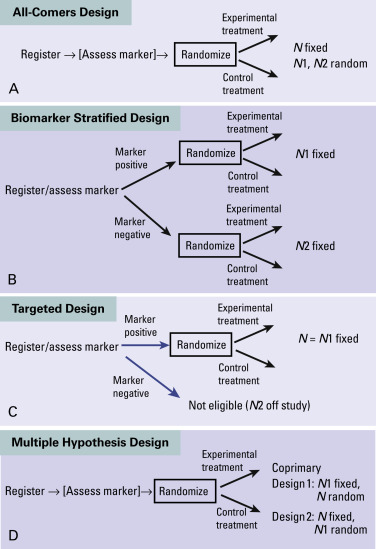

Information from phase II studies is often used to establish the patient population for a phase III study. Because therapies are being developed to target a specific biologic mechanism of the tumor or host, biomarker evaluation is an important consideration when designing a phase II study. Possible design choices are an all-comers design with secondary marker evaluation; a biomarker-stratified design with specific accrual targets within biomarker-defined subgroups; enrichment or targeted designs, which enroll only marker-positive patients; and multiple hypothesis designs, which specify both an overall population assessment and a subgroup assessment (Fig. 59.3). Any of these designs may be used for the randomized phase II trial in an overall drug development strategy (Fig. 59.2).

In an all-comers design with secondary marker evaluation, the marker can be evaluated either at registration or at the end of the study. When the marker is evaluated at registration, the random assignment can be stratified to ensure balance of the marker between the treatment arms. Secondary evaluation is a reasonable approach when multiple biomarkers are to be assessed and little is known about the markers. However, depending on the prevalence of a marker, studies using this approach may be underpowered. For example, if the prevalence of the marker is 10%, in a two-armed study of 100 patients with 1:1 randomization, there would be only about five patients per arm for subgroup evaluation.
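The arithmetic behind this example can be written out as a short calculation. The sketch below reproduces the expected subgroup size per arm and, as an added illustration, the chance that an arm ends up with very few marker-positive patients. It assumes equal arm sizes and independent marker status across patients without stratification; the function names are hypothetical.

```python
from math import comb

def expected_positive_per_arm(n_total, prevalence, n_arms=2):
    """Expected number of marker-positive patients in each arm."""
    return n_total * prevalence / n_arms

def prob_at_most(k, n_arm, prevalence):
    """P(at most k marker-positive patients in an arm of n_arm patients),
    assuming marker status is independent across patients."""
    return sum(
        comb(n_arm, j) * prevalence**j * (1 - prevalence)**(n_arm - j)
        for j in range(k + 1)
    )

# The example from the text: 100 patients, 1:1 randomization, 10% prevalence.
print(expected_positive_per_arm(100, 0.10))  # about five patients per arm
print(prob_at_most(2, 50, 0.10))             # chance of two or fewer in an arm
```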

In a study with a biomarker-stratified design, specific accrual targets are established per stratum, based on stratum-specific objectives. All patients are assessed for marker status at registration, and this design ensures adequate power to detect effects within biomarker subgroups. However, accrual for each stratum could differ substantially based on the marker prevalence, making this design impractical. In all cases, it is important to keep in mind that the apparent differences in effect estimates across subgroups could be due to random variation alone.

In studies with an enrichment or targeted design, only individuals with the biomarker are enrolled and studied. Although this approach may be the most efficient design to screen a biomarker–drug combination, this design provides no information about how the therapy performs in the population with marker-negative tumors.

A multiple hypothesis design specifies coprimary objectives for evaluating the treatment within a biomarker-defined subgroup and the overall study population, splitting the study-wide type I error between the two objectives. Determining the target sample size is a key distinction between an all-comers design and a multiple hypothesis design. If the multiple hypothesis study is designed to have a specific number of patients with marker-positive tumors (N₁), then the total sample size is the number of patients (N) needed to accrue N₁ patients. Alternatively, if the study is designed around the entire study population (N), then the percentage of the study population that has marker-positive tumors determines N₁. In this design, marker status can be evaluated either at registration or during the study.
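The two ways of fixing the sample size can be sketched as simple calculations. The code below is illustrative only: the function names, the prevalence, and the way the type I error is divided are assumptions, and the total obtained from a target number of marker-positive patients is an expected value, because the number actually screened to reach that target is random.

```python
import math

def total_sample_size(n_marker_positive, prevalence):
    """Expected total accrual N needed to reach N1 marker-positive patients."""
    return math.ceil(n_marker_positive / prevalence)

def expected_marker_positive(n_total, prevalence):
    """Expected N1 when the study is sized around the whole population N."""
    return n_total * prevalence

def split_alpha(alpha_total, fraction_to_subgroup):
    """Divide the study-wide type I error between the subgroup and the
    overall-population objectives (the split itself is a design choice)."""
    alpha_subgroup = alpha_total * fraction_to_subgroup
    return alpha_subgroup, alpha_total - alpha_subgroup

# Hypothetical design: 50 marker-positive patients needed, 25% prevalence.
print(total_sample_size(50, 0.25))
print(expected_marker_positive(200, 0.25))
print(split_alpha(0.10, 0.5))
```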

Any of these strategies for establishing biomarker-based subgroups may be used in a single-arm trial by simply assigning all registered patients to the experimental therapy. However, except for studies of patients with rare tumors and small biomarker subsets, a randomized phase II design is typically used, so that the prognostic value of the biomarker can be evaluated in the control arm. For example, in studies evaluating ALK inhibitors, it appears that patients who have tumors with the echinoderm microtubule-associated protein like 4 (EML4)–ALK fusion may also derive greater benefit from pemetrexed plus an ALK inhibitor than do patients who have tumors negative for the fusion.

Adaptive Designs

In its 2010 draft guidance, the US Food and Drug Administration defines an adaptive design clinical study as a study with a prospectively planned opportunity for modification of one or more of the study design features based on analysis of (usually interim) data from participants in the study. Examples of adaptations are modification of the randomization ratio between treatment arms, the study population, the treatment arms, and target treatment effect. A noteworthy example of an adaptive design study is the Biomarker-Integrated Approaches of Targeted Therapy for Lung Cancer Elimination phase II program, in which the randomization ratio is altered within biomarker-defined subgroups based on interim estimates of treatment efficacy within the treatment arm–subgroup combinations; the goal of the trial is to identify biomarker–drug combinations for further study.
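A toy version of outcome-adaptive randomization within a single biomarker subgroup may help to show the mechanics. The sketch below is not the statistical model used in the program named above; it assumes a binary outcome such as disease control, independent Beta(1, 1) priors for each arm, and allocation probabilities proportional to the posterior mean success rates. All names and counts are hypothetical.

```python
def allocation_probabilities(successes, failures):
    """Map each arm to its randomization probability for the next patient
    in one biomarker subgroup, given interim success/failure counts."""
    # Posterior mean of a Beta(1, 1) prior updated with the interim data.
    post_mean = {
        arm: (successes[arm] + 1) / (successes[arm] + failures[arm] + 2)
        for arm in successes
    }
    total = sum(post_mean.values())
    return {arm: m / total for arm, m in post_mean.items()}

# Hypothetical interim data for two arms within one subgroup.
probs = allocation_probabilities({"A": 8, "B": 2}, {"A": 2, "B": 8})
print(probs)
```

In practice such schemes usually begin with a period of equal randomization and may bound the allocation probabilities so that no arm is starved of patients early on.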

The usefulness of adaptive randomization is the subject of debate. Parmar et al. have described an approach to expedite drug development by allowing investigators to drop and add treatment arms to an ongoing study. It is important to note that a group sequential design, which is a design using interim monitoring with prespecified rules for early stopping due to either efficacy or futility, is not an adaptive design because the design features are not modified based on analysis of study data.
