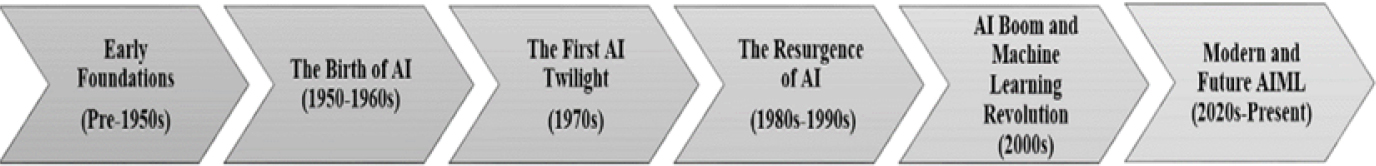

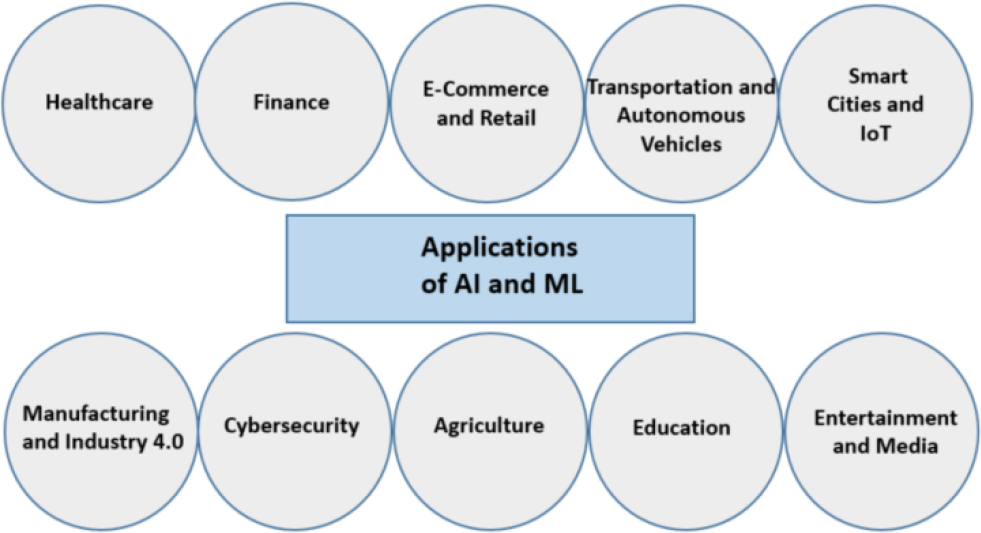

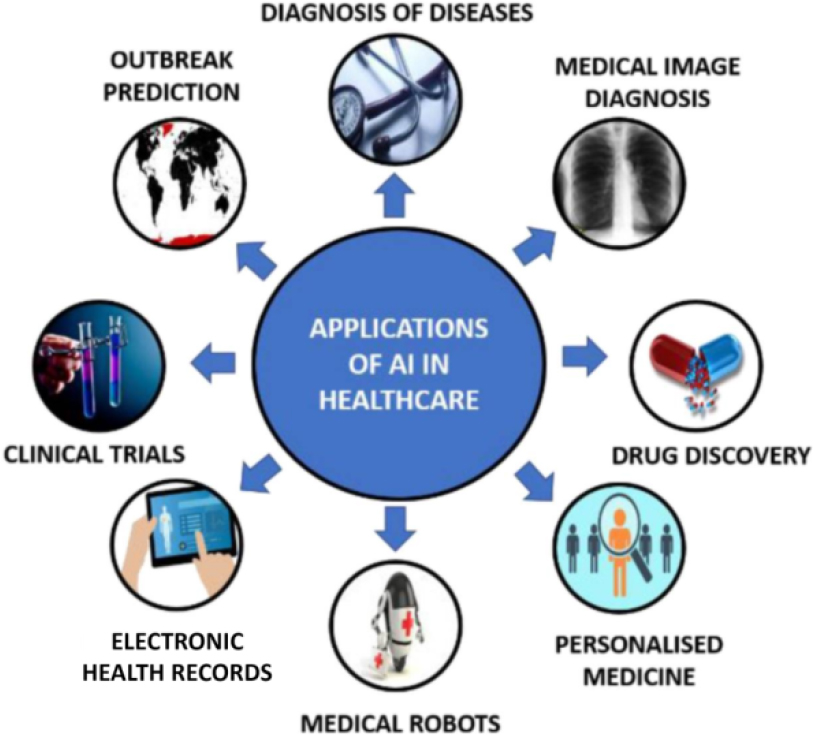

Healthcare currently faces many challenges owing to the large number of people requiring medical support. Artificial intelligence (AI), when integrated with healthcare applications, can increase the efficiency, accuracy and accessibility of medical services. This chapter describes the foundations of AI in healthcare: AI and machine learning (ML) in healthcare, AI-based medical practices and the use of emerging technologies such as robotics, wearables and big data in the medical field. The chapter begins by discussing the concepts of AI and ML. AI is a subdomain of computer science that enables machines to imitate human intelligence in order to learn, reason and solve problems. ML is a subset of AI consisting of tools for training algorithms to identify patterns in data and make judgments based on them. ML technologies play a leading role in healthcare for diagnosis, treatment guidance, predictive analysis, personalized medicine, etc. As AI has the potential to transform the practice of medicine and the delivery of healthcare, the second section of this chapter provides an overview of the applications of AI in medicine. Emerging AI diagnostic tools such as deep learning models can be more accurate than conventional methods in detecting cancer and cardiac and neurological diseases. For treatment purposes, AI-driven decision support systems recommend plans of action that lead to improvements in patient conditions. AI-based medical systems include medical imaging, natural language processing for clinical documentation and virtual health assistants. The influence of AI on healthcare also extends to emerging and disruptive technologies, including robotics, wearables and big data analytics, which are discussed in the next segment of this chapter.
In robotic-assisted surgery, medical robotics improves precision, reduces recovery time and enhances surgical outcomes. Autonomous robots in hospitals can assist with routine tasks, including disinfection, monitoring and medication delivery. Wearable health devices equipped with AI-based analytics continuously monitor vital signs to detect disease at an early stage and encourage preventive healthcare practices. They enable patients to monitor their health in real time and shift healthcare from reactive to preventive models. Big data analytics with AI can extract relevant information from large volumes of medical data, help manage population health, support epidemiological studies and enhance the operational efficiency of healthcare institutions. By applying AI, the healthcare sector is expected to see major breakthroughs in patient care, operational efficiency and medical research, enabling a more intelligent and responsive health ecosystem.

Contemporary medicine is undergoing a substantial change driven by artificial intelligence (AI). For example, AI helps medical professionals to detect diseases at an early stage and to design personalized treatment strategies. With the digitization of healthcare, AI can function as a robust tool that enhances working efficiency, improves patient outcomes and enables data-driven decision-making (Murphy 2012; Russell and Norvig 2020). Furthermore, probabilistic approaches in machine learning (ML) help estimate clinical risks and uncertainties, enabling more informed decision-making in treatment planning.
Healthcare generates vast amounts of complex data, such as patient records, medical imaging data, genomic data and real-time tracking data (Raghupathi and Raghupathi 2014; Obermeyer and Emanuel 2016). Conventional methods, which are prone to errors and need significant human resources, are generally used to analyze these types of data. The ML and deep learning (DL) methods of AI have shown considerable promise in processing such data efficiently and rapidly with minimal human intervention (Krizhevsky et al. 2012; Goodfellow et al. 2016). The integration of AI with big data analytics also facilitates early disease prediction and real-time patient monitoring, significantly improving healthcare delivery.

A main driving factor behind the use of AI in healthcare is the escalating demand for precision medicine. Healthcare providers can use AI algorithms to tailor treatments to a patient’s genetic makeup, lifestyle and medical history (Collins and Varmus 2015). By lowering side effects and boosting therapeutic efficacy, this shift toward personalized medicine ultimately enhances patient care (Gulshan et al. 2016; McKinney et al. 2020).

The application of AI in healthcare is also being driven by advances in robotics, wearable technology and big data analytics. Robots assist in complex surgeries (Saeidi et al. 2021; Gamal et al. 2024), wearable technology continuously monitors vital signs (Ometov et al. 2020; Verma et al. 2023), and big data solutions extract valuable insights from massive datasets (Kapoor et al. 2021; Ahmed et al. 2023). These advances support better patient tracking, early disease detection and efficient healthcare administration. Although AI in healthcare can be transformative, it raises particular issues concerning the privacy of patient data, ethics and regulation (Bostrom 2014; Russell 2019).
Therefore, AI-driven solutions in healthcare must be transparent, free from bias and ethically sound if they are to be integrated successfully.

In addition, the incorporation of AI in healthcare is driving a move toward proactive and preventive care models. Using predictive analytics and real-time data streams from wearables and electronic health records, AI systems can detect possible health risks before the onset of symptoms, allowing timely interventions and lessening the burden on healthcare systems. These abilities are especially useful in the management of chronic illness and in public health monitoring, where early warnings can help avoid complications or outbreaks. Robotic devices, now transitioning to semi-autonomous capabilities, are improving surgical accuracy and reproducibility, which is particularly crucial in minimally invasive surgery (Ometov et al. 2020; Kapoor et al. 2021; Saeidi et al. 2021; Ahmed et al. 2023; Gamal et al. 2024; Verma et al. 2023). Together, these technologies represent not only a technological shift, but also a fundamental redefinition of healthcare delivery, making it more personalized, efficient and anticipatory. A glimpse of patient care using key AI technologies is shown in Figure 18.1.

The healthcare industry is currently navigating myriad challenges, driven primarily by the accelerating demand for medical services from a growing global population, and AI has emerged as a transformative force that enhances the efficiency, accuracy and accessibility of healthcare delivery. This chapter gives an insight into AI’s foundational role in healthcare: the first section presents an overview of AI and ML, and the second covers AI-driven medical practices. The application of emerging technologies such as robotics, wearables and big data analytics in the health sector is discussed in the next segment. The chapter ends with conclusions on the role of AI in healthcare.
Figure 18.1. AI-based healthcare system – a glimpse.

Owing to the rapid pace of technological change, new technologies are emerging on the market every day. AI is a buzzword that is creating a revolution by making machines intelligent. The term “artificial intelligence” was coined by John McCarthy, an emeritus professor of computer science at Stanford University. He defined it as follows: “AI is the science and engineering of making intelligent machines, especially intelligent computer programs” (McCarthy et al. 1956). Thus, AI machines can think and solve complex problems with a human-like approach. For example, when intelligent humans are given a task, they may stumble and then learn from their errors. In the same fashion, AI is expected to tackle problems, make mistakes and learn from them as part of its self-improvement. AI typically mimics features of human intelligence and implements them as procedures in a computer-friendly way.

Generally, AI is divided into three categories: narrow AI, general AI and super-intelligent AI. Narrow AI, also called weak AI, is tailored to specific tasks, such as speech recognition (Siri, Alexa) or recommendation systems (Netflix, Amazon). General AI, also known as strong AI, is a theoretical form of AI with human-like intellectual abilities that is capable of performing any cognitive task a human can do. Super-intelligent AI is a hypothetical form of AI that exceeds human intellect in all respects (Bostrom 2014).

The vast AI domain broadly includes two techniques, ML and DL, as shown in Figure 18.2. In 1959, Arthur Samuel defined ML as follows: “machine learning is the field of study that gives computers the ability to learn without being explicitly programmed” (Samuel 1959). Thus, ML is a subset of AI that learns patterns from data and makes decisions or predictions without being explicitly programmed.
ML mainly focuses on developing algorithms that continuously improve system performance through experience. DL is defined as follows: “deep learning refers to a set of algorithms in ML that attempt to learn layered models of input data, where each layer learns a representation at a different level of abstraction” (Goodfellow et al. 2016). DL is a subset of ML that uses artificial neural networks with multiple layers (deep neural networks) to model and extract complex patterns from large datasets. It enables computers to perform tasks such as image recognition, natural language processing (NLP) and autonomous decision-making with high accuracy. In short, AI is the general idea of machines carrying out intelligent activities, ML is a subset of AI that enables machines to learn from data, and DL is a subfield of ML that uses neural networks for more complex tasks.

AI is founded on a broad variety of academic fields such as mathematics, statistics, computer science, neuroscience and cognitive science. AI systems use mathematical concepts such as linear algebra, calculus and probability for data processing and model optimization (Goodfellow et al. 2016). Knowledge representation and reasoning are essential elements of AI, which stores and processes information with the help of ontologies, rule-based expert systems and semantic networks (Russell and Norvig 2020). AI also uses search and optimization methods such as A* search, genetic algorithms and simulated annealing to solve intricate problems effectively (Poole et al. 1998). ML, a key part of AI, enables systems to learn from data through supervised, unsupervised and reinforcement learning methods (Boole 1854). By enabling systems to detect patterns in speech, text and images, DL and neural networks further strengthen AI capabilities, pushing computer vision and NLP to the next level (Goodfellow et al. 2016).
In addition, AI ethics and safety have emerged as key issues, addressing bias, fairness and transparency in decision-making (Bostrom 2014).

Figure 18.2. AI techniques

AI is constantly progressing and consolidating interdisciplinary research in order to build smart systems capable of reasoning, learning and self-adapting to solve real-world problems. The journey of AI from its initial stage to its present form is shown in Figure 18.3.

Figure 18.3. Evolution of AI

The pre-1950s period is known as the early foundations of AI. The basis of AI and ML was proposed by Gottfried Wilhelm Leibniz in 1677 with the concept of a symbolic logic system that could represent the human mind, merging mathematical and philosophical logic with human reasoning and computing. George Boole then introduced Boolean algebra, the basis of later digital computing, in 1854 (Boole 1854). The growth of AI was ignited in the 1930s and 1940s by the introduction of the Turing machine, which served as the basis for theoretical computation (Turing 1936), and the first mathematical model of an artificial neuron (McCulloch and Pitts 1943).

The 1950s and 1960s saw the birth of AI. Alan Turing proposed the Turing test for computer intelligence in 1950 (Turing 1950). John McCarthy, Marvin Minsky, Nathaniel Rochester and Claude Shannon organized the Dartmouth Conference in 1956, which marked the official starting point for the field of AI (McCarthy et al. 1956). The two most significant breakthroughs in the field of AI during that time were the Perceptron, an early neural network model (Rosenblatt 1958), and the first ML software to play checkers (Samuel 1959).

A lack of progress and overpromising led to reduced funding and the first AI winter in the 1970s. Neural network research came to its first hiatus when it was shown that a simple perceptron cannot solve the XOR problem (Minsky and Papert 1969).
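The XOR limitation can be illustrated with a minimal sketch. The code below is not taken from Minsky and Papert or from this chapter. It assumes a single-layer perceptron with a step activation, trained by the classic perceptron learning rule; the function names (`train_perceptron`, `accuracy`), the learning rate and the number of epochs are illustrative choices. Such a unit can learn the linearly separable AND function, but no single linear threshold can classify more than three of the four XOR cases correctly.

```python
def predict(weights, bias, x1, x2):
    """Step activation: output 1 if the weighted sum is positive, else 0."""
    return 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0


def train_perceptron(samples, epochs=50, lr=1.0):
    """Train a single-layer perceptron with the perceptron learning rule.

    samples: list of ((x1, x2), target) pairs with binary targets.
    epochs and lr are illustrative defaults, not values from the chapter.
    """
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            error = target - predict(weights, bias, x1, x2)
            # Move the decision boundary only when the prediction is wrong.
            weights[0] += lr * error * x1
            weights[1] += lr * error * x2
            bias += lr * error
    return weights, bias


def accuracy(samples, weights, bias):
    """Fraction of samples classified correctly."""
    hits = sum(
        1 for (x1, x2), target in samples
        if predict(weights, bias, x1, x2) == target
    )
    return hits / len(samples)


# Truth tables: AND is linearly separable, XOR is not.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_weights, and_bias = train_perceptron(AND_DATA)
xor_weights, xor_bias = train_perceptron(XOR_DATA)

print("AND accuracy:", accuracy(AND_DATA, and_weights, and_bias))
print("XOR accuracy:", accuracy(XOR_DATA, xor_weights, xor_bias))
```

Adding a hidden layer between the inputs and the output removes this limitation, which is why methods for training multi-layer networks became central to later neural network research.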
The UK’s funding cuts during this period (Lighthill 1973) and the launch of competing expert systems such as MYCIN in 1974 further restricted the advancement of AI.

From 1980 until the early 1990s, as rule-based AI gave way to statistical models, ML grew notably as computing power increased. Rumelhart, Hinton and Williams popularized the backpropagation technique in 1986 (Rumelhart et al. 1986), overcoming the limitations of neural networks that had surfaced during the AI winter. The subsequent introduction of support vector machines (SVMs) in 1995 (Cortes and Vapnik 1995) and random forests in 2001 (Breiman 2001) further improved classification.

AI and ML experienced a boom from the 2000s onward. Big data, greater processing power and DL helped AI make major advances. The groundbreaking advances of this era included AI decision-making through reinforcement learning (Watkins and Dayan 1992), DL (Hinton et al. 2006), AlexNet image recognition in 2012 (Krizhevsky et al. 2012) and the defeat of human Go champions by Google DeepMind’s AlphaGo (Silver et al. 2016). Contemporary AI (2020s-present) has introduced chatbots, self-driving cars and medical diagnosis systems. NLP saw revolutionary advances in 2020 with large language models (Brown et al. 2020). In addition, ethical AI and explainable AI (XAI) emerged (Russell 2019). Emerging trends such as quantum AI, general AI and AI regulation are expected to gain prominence in the near future.

AI has many advantages, which have been observed in application areas beyond those discussed above. In fields such as data entry, manufacturing and customer service, AI and ML can automate repetitive operations, boosting productivity and reducing human labor. AI-driven systems examine huge datasets to produce precise insights that help companies make well-informed decisions. AI makes it possible to create personalized user experiences, such as marketing, streaming and e-commerce recommendation systems.
By outperforming humans at certain complicated tasks, AI-driven solutions can increase productivity across a range of sectors. AI models are highly accurate in identifying patterns and spotting anomalies, which helps sectors such as healthcare (e.g. disease diagnosis) and finance (e.g. fraud detection). AI systems can also scale operations effectively and manage massive volumes of data without any appreciable increase in cost. Unlike humans, AI systems can work nonstop, enhancing operational effectiveness and customer service. Business intelligence and forecasting benefit from ML’s ability to study massive amounts of data in order to spot patterns and generate forecasts.

Figure 18.4. AI applications

AI and ML are playing a revolutionary role in today’s industry by unlocking potential in automation, decision-making and other capabilities across various domains, as shown in Figure 18.4. AI is transforming the healthcare sector by refining diagnostics, improving the accuracy of treatments, streamlining administrative processes, etc., as shown in Figure 18.5. Life-threatening diseases such as cancer, neurological disorders and tuberculosis are being diagnosed faster and more accurately with the help of AI and ML. Drug discovery, robotic surgery and personalized medicine are other important applications of AI and ML in healthcare.

Fraud detection, customer support, credit scoring and algorithmic trading are a few examples of AI and ML applications in the finance sector. In e-commerce and retail, AI and ML are used to predict inventory requirements and optimize supply chains. AI-powered visual search, recommendation systems, virtual assistants and chatbots make it possible to search by image, support customers and recommend products, movies, music, etc. AI and ML help to improve urban planning by optimizing traffic flow and reducing congestion.
In transportation, the major applications of AI include self-driving cars and preventive maintenance of vehicles. The integration of AI and ML with the Internet of Things (IoT) has enabled remarkable achievements in creating smart cities through smart energy management, energy consumption optimization, surveillance and security, and waste recycling and disposal.

In Industry 4.0, AI and ML are used for predictive maintenance, anticipating machinery failures to lower downtime and maintenance costs. In quality control, AI-powered systems detect faults in production lines. AI-driven robots automate warehouse management, including assembly lines and packaging. In cybersecurity, AI and ML identify and prevent cyber threats such as malware and phishing attacks. AI also detects unusual activity patterns to prevent identity theft. In addition, AI tools support deepfake detection by identifying manipulated videos and images used for misinformation.

Agriculture currently uses AI and ML to analyze soil, weather and crop data for precision farming. AI also identifies plant diseases and recommends treatments. In automated harvesting, AI-driven robots pick and sort crops. In education, AI can act as a tutor and, through personalized learning, adapt lessons to a learner’s progress and learning style. Teachers’ time is saved as AI can grade assignments and exams. In addition, language barriers can be bridged using AI-driven tools such as Google Translate. For entertainment and media content creation, AI generates images, videos and music. It also automatically edits and adds effects to enhance video production. In gaming, AI enhances the game environment and non-player character (NPC) behavior.

Figure 18.5. Applications of AI in healthcare (Pandya et al. 2021).
In the healthcare sector, AI includes a range of technologies, such as ML, DL, NLP and robotics, each of which plays an important role in contemporary medical breakthroughs. ML and DL examine huge amounts of medical data in order to identify patterns and to support early disease detection and individualized treatment protocols. NLP supports the processing and comprehension of medical texts, aiding the management of electronic health records (EHRs), clinical documentation and automated patient communication. Robotics supports intricate surgeries, rehabilitation therapies and hospital automation.

18

Foundation of Artificial Intelligence in Healthcare

18.1. Introduction

18.2. Overview of AI

18.3. AI applications in medicine: literature review
